Unlocking AI innovation through automated governance

As AI systems scale across Deliveroo, we built a unified infrastructure that embeds governance directly into our platform — enabling engineers to move fast while staying compliant. Here’s how our AI Hub and Governance as Code approach make innovation and oversight work together.

Over the past two years, we’ve witnessed a Cambrian explosion of AI development, with Generative and Agentic AI capturing stakeholders’ attention. AI/ML Engineers are delivering real business value by combining LLMs and agentic systems with traditional machine learning systems. It’s been an era of rapid prototyping and quick integrations. Teams have adopted different approaches, each unlocking new potential but also introducing complexity. As these prototypes evolved into production systems, duplicated effort and inefficiencies began to show. Teams built similar observability and reporting solutions in parallel, governance work between engineering and legal teams grew rapidly in complexity, and compliance processes developed as bespoke solutions on a per-team basis.

We realised that this complexity was limiting our efforts to scale AI projects across the company. To move fast and stay compliant, we needed consistent governance, shared tooling, and clear accountability across teams. We therefore built the components of our AI ecosystem around a central infrastructure designed for both innovation and oversight. Built upon the principles of automation and transparency, this approach enables our engineers to build safer systems, follow streamlined governance processes, and ultimately ship features faster.

Introducing our AI Agent Platform and AI Hub

Our in-house AI Agent Platform deserves a blog post of its own, so we will keep it short for now. It is our foundation for developing, deploying, and operating agentic AI systems at Deliveroo. It provides MCP (Model Context Protocol) server integrations, handles workflow orchestration, abstracts over different model providers, and includes an evaluation SDK for both offline and online eval pipelines.

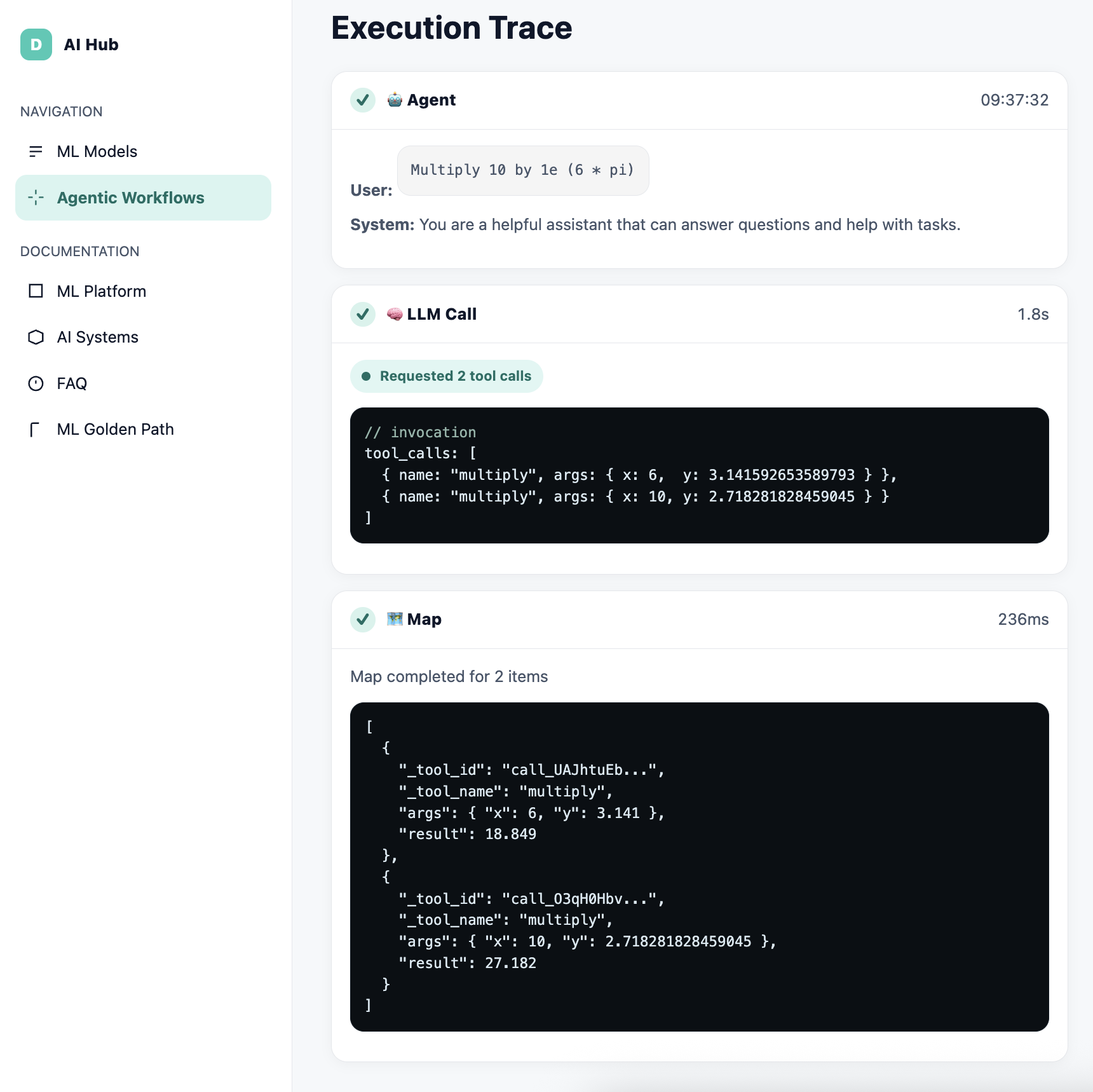

Our AI Agent Platform also gives our engineers full AI agent tracing out of the box, with data storage and user interface components for visualisation. Teams can configure their own data retention policies for these traces to comply with national and use-case-specific regulations. Given the stochastic nature of generative AI, comprehensive tracing is essential for observability, debugging, and system reliability.
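To make the idea of per-team retention policies concrete, here is a minimal sketch in Python. The `RetentionPolicy` class, the use-case names and the retention periods are all hypothetical placeholders for illustration, not the platform's actual configuration format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class RetentionPolicy:
    """Hypothetical per-use-case retention rule for agent traces."""
    use_case: str
    retain_for: timedelta


# Placeholder values only; real periods would come from legal and privacy teams.
POLICIES = {
    "customer_chat": RetentionPolicy("customer_chat", timedelta(days=30)),
    "internal_tooling": RetentionPolicy("internal_tooling", timedelta(days=90)),
}


def is_expired(created_at: datetime, policy: RetentionPolicy, now: datetime) -> bool:
    """Return True when a trace is older than its policy allows."""
    return now - created_at > policy.retain_for


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
trace_created = datetime(2024, 12, 15, tzinfo=timezone.utc)  # 47 days earlier
```

With these placeholder values, the same trace would be past its retention period under the 30-day policy but still within the 90-day one.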

One of the ways that these traces can be accessed is through a web UI called the AI Hub. Both technical and non-technical stakeholders can access trace data in an intuitive way, improving transparency and accountability across the organisation. Of course, access controls are in place to ensure that sensitive trace data is accessible only to authorised users.

Agentic systems pose unique tracing challenges compared to traditional chatbots or single-turn LLM applications. Instead of linear request–response interactions, agents often operate across multiple steps, sometimes running in parallel or recursively invoking other agents. To manage this complexity, the AI Hub shows agent execution as a lineage or DAG (Directed Acyclic Graph). Each step in the execution chain can be visualised and queried using XML-style access notation, allowing developers to easily navigate and interpret even the most complex multi-agent traces.
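As a rough illustration of why a DAG is a convenient shape for agent traces, the sketch below models a small, made-up trace as a mapping from each step to the steps it depends on. The step names, the `lineage` and `paths_to` helpers and the slash-separated path format are assumptions for this example; they are not the AI Hub's actual data model or query notation.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical trace: each step maps to the set of steps it depends on.
# Two tool calls run in parallel after planning, then feed a final reply.
trace = {
    "plan": set(),
    "search_menu": {"plan"},
    "check_allergens": {"plan"},
    "compose_reply": {"search_menu", "check_allergens"},
}


def execution_order(deps: dict[str, set[str]]) -> list[str]:
    """Return one valid execution order; raises CycleError if deps is not acyclic."""
    return list(TopologicalSorter(deps).static_order())


def lineage(deps: dict[str, set[str]], step: str) -> set[str]:
    """Collect every upstream step that contributed to the given step."""
    seen: set[str] = set()
    stack = [step]
    while stack:
        for parent in deps[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def paths_to(deps: dict[str, set[str]], step: str) -> list[str]:
    """List every root-to-step path as a slash-separated string (assumed format)."""
    parents = deps[step]
    if not parents:
        return [step]
    return sorted(f"{path}/{step}" for p in parents for path in paths_to(deps, p))


order = execution_order(trace)
```

Here `paths_to(trace, "compose_reply")` yields one path per parallel branch, which is the kind of addressable structure that makes a specific step in a multi-agent trace easy to point at.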

AI Inventory

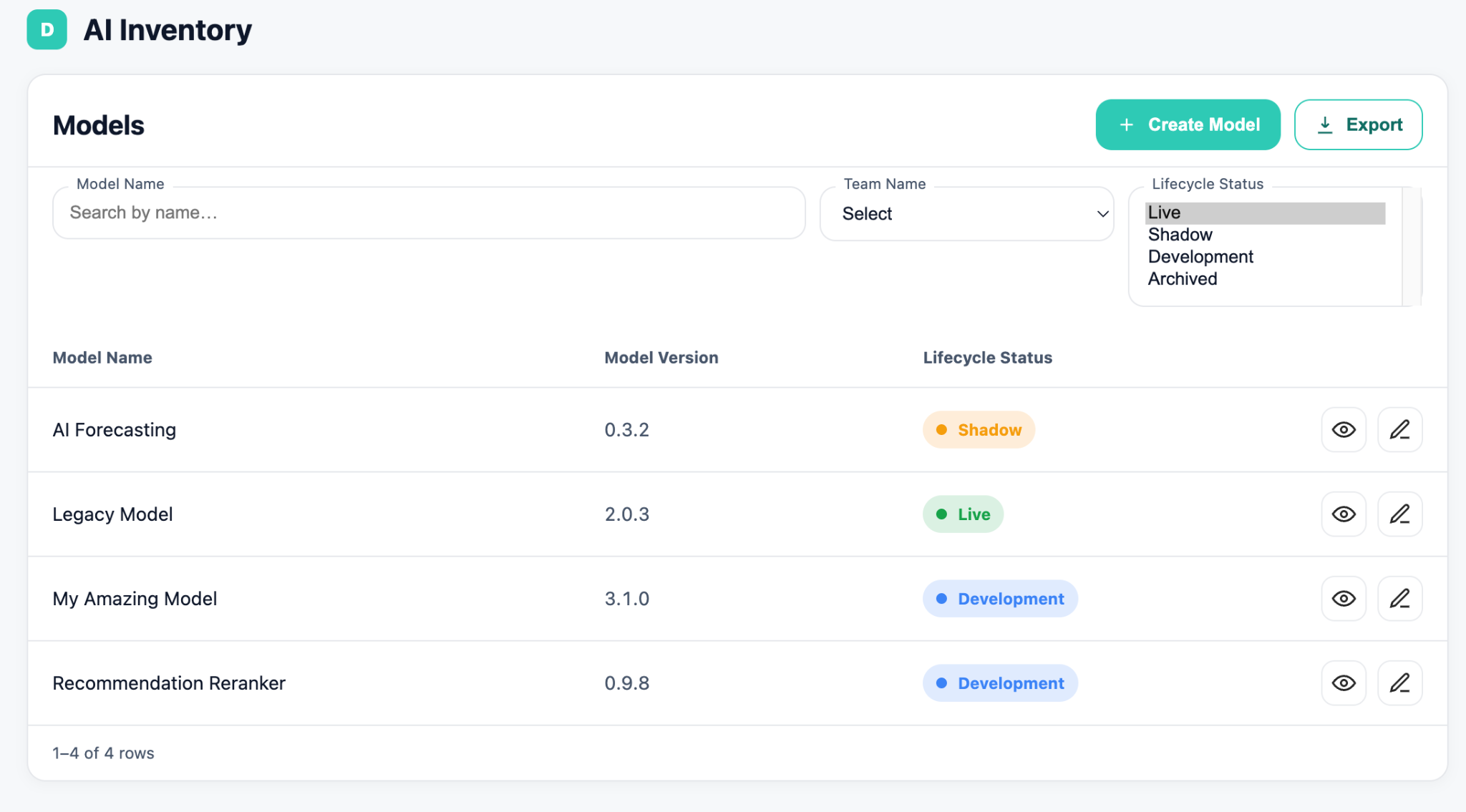

In addition to enhancing developer experience by visualising agentic workflows and traces, the AI Hub also hosts our AI inventory. An AI Inventory is a record of all AI systems, agents and models deployed across the business. By hosting our inventory in the AI Hub, we are able to automatically pull operational metrics from platform infrastructure. Operational metrics, such as data lineage and pipeline failure rates, are useful not only for engineers who are focused on delivery velocity. Making these metrics available to compliance and legal functions also helps to promote AI transparency, which results in higher-quality risk assessments. Furthermore, the AI Hub allows these risk assessments for each model to be recorded directly into our AI inventory. This helps maintain human oversight over the development and operation of AI, which is fundamental to Deliveroo’s commitment to responsible AI.
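One way to picture an inventory entry is as a record that combines automatically collected metrics with human-authored risk assessments. The sketch below is an assumption-laden illustration: the field names, the risk scale and the 365-day review window are hypothetical and are not taken from the AI Hub.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class RiskAssessment:
    """A human-authored assessment attached to an inventory entry (hypothetical)."""
    assessed_on: date
    reviewer: str
    risk_level: str  # assumed scale, e.g. "low", "medium", "high"


@dataclass
class InventoryEntry:
    """One AI system in the inventory (hypothetical field names)."""
    system_name: str
    owner_team: str
    model_version: str
    pipeline_failure_rate: float  # operational metric pulled from the platform
    upstream_datasets: list[str] = field(default_factory=list)  # data lineage
    assessments: list[RiskAssessment] = field(default_factory=list)

    def latest_assessment(self) -> Optional[RiskAssessment]:
        """Return the most recent assessment, or None if there is none."""
        return max(self.assessments, key=lambda a: a.assessed_on, default=None)

    def needs_review(self, today: date, max_age_days: int = 365) -> bool:
        """Flag entries with no assessment, or one older than the assumed window."""
        latest = self.latest_assessment()
        return latest is None or (today - latest.assessed_on).days > max_age_days


entry = InventoryEntry("support-chat-agent", "care-ai", "2.1.0", 0.02, ["chat_logs"])
```

Keeping assessments on the same record as the operational metrics means a reviewer sees what the system does and how it is behaving in one place.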

AI Governance as Code

Governance as Code (GaC) is a governance strategy that automates policies and practices rather than relying on manual audits and reporting. Given the scale of Deliveroo’s AI offering, an automation-first approach to AI governance provides greater confidence in and oversight over our risk mitigation measures. In short, GaC turns static AI policies into a dynamic practice that is enforceable throughout the development lifecycle.

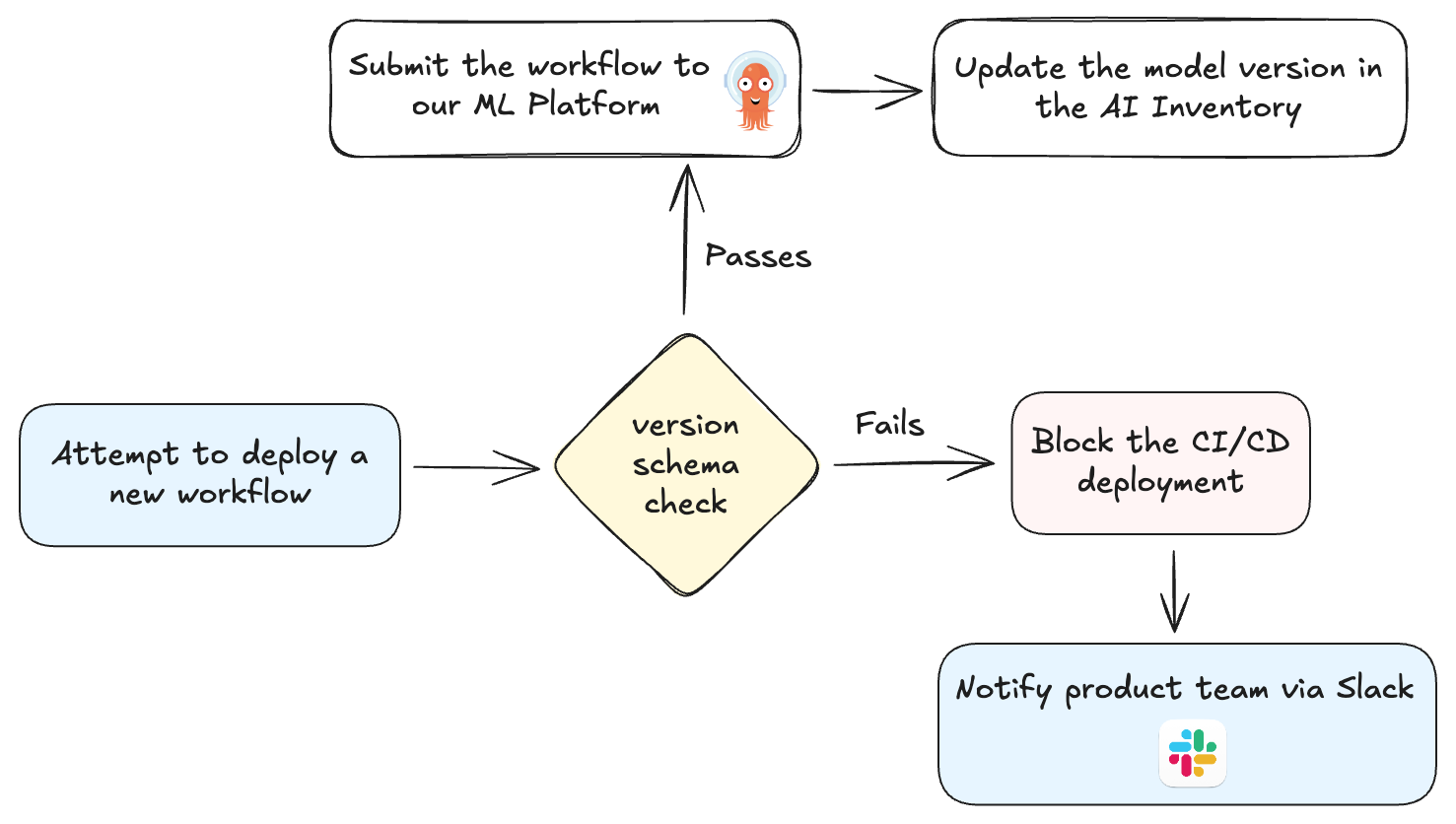

One example of how this works in practice is our implementation of a standardised AI model versioning policy. Standardised versioning clarifies model lineage and promotes a common language across our AI/ML estate. Such a policy supports AI safety by allowing engineers to easily compare versions, roll back problematic models and assist peers in other teams during live incidents. Metadata on the AI Hub provides proof of teams’ adherence to the policy.

We are currently working on enforcing compliance with this policy through MLOps. In practice, this means that we can temporarily block non-conforming models from deploying to our staging platform infrastructure, thereby enforcing governance through code. A further benefit of standardising the way that we version our models is that we automatically update our AI inventory to include the latest model versions that have been deployed.
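A deployment gate of this kind could look roughly like the following sketch. The actual versioning policy is not described here, so the example assumes a simple `MAJOR.MINOR.PATCH` scheme purely for illustration; `deployment_gate`, the model names and the in-memory `inventory` dictionary are likewise hypothetical.

```python
import re
from typing import Optional

# Assumed policy for illustration only: versions must look like MAJOR.MINOR.PATCH.
VERSION_PATTERN = re.compile(r"(0|[1-9]\d*)\.(0|[1-9]\d*)\.(0|[1-9]\d*)")


def parse_version(version: str) -> Optional[tuple[int, ...]]:
    """Return (major, minor, patch) if the version conforms, else None."""
    match = VERSION_PATTERN.fullmatch(version)
    return tuple(int(part) for part in match.groups()) if match else None


def deployment_gate(model: str, version: str, inventory: dict[str, str]) -> tuple[bool, str]:
    """Block non-conforming versions; record conforming ones in the inventory."""
    if parse_version(version) is None:
        return False, f"{model}: version '{version}' does not follow MAJOR.MINOR.PATCH"
    inventory[model] = version  # keep the inventory in step with deployments
    return True, f"{model}: version {version} recorded in inventory"


inventory: dict[str, str] = {}
blocked, blocked_reason = deployment_gate("tone-classifier", "v2-final", inventory)
allowed, allowed_reason = deployment_gate("tone-classifier", "2.1.0", inventory)
```

Because versions parse into integer tuples, comparing two of them (for example when deciding which model to roll back to) is an ordinary tuple comparison rather than a string comparison.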

Policy Datasets

Many aspects of AI evaluation share common foundations across Deliveroo’s three-sided marketplace. Hence, AI agents deployed across the business have similar evaluation and governance needs. For example, we operate customer chat agents that must respond in a defined company tone: professional, empathetic, and unbiased, without being overly deferential. Since this company tone policy is required across all partner- and rider-facing agents as well, we can develop a common dataset that benchmarks our performance against our tone policy across all AI agents.

By standardising policies into datasets, we enable downstream agentic AI models to reference and apply these standards automatically during training, testing, or runtime evaluation. This moves compliance from a manual, meeting-heavy process to an integrated, automated practice embedded directly into the development pipeline. We believe this approach will accelerate AI development and reduce the operational overhead of ensuring compliance. Instead of manual legal reviews for every project, legal and privacy teams can contribute directly to policy datasets that are versioned, reviewed, and reused across the platform. This collaboration transforms compliance into a scalable, developer-friendly mechanism for responsible AI development, one that strengthens governance and encourages innovation.
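The sketch below shows, under heavy simplification, how a shared policy dataset could be reused to score any agent. Everything in it is hypothetical: the `PolicyExample` shape, the made-up prompts, and especially the banned-phrase check, which stands in for whatever tone evaluation method is used in practice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PolicyExample:
    """One benchmark case in a policy dataset (hypothetical shape)."""
    prompt: str
    banned_phrases: tuple[str, ...]  # wording the tone policy would rule out


# Made-up examples; a real dataset would be authored and reviewed by policy owners.
TONE_POLICY_DATASET = [
    PolicyExample("My order arrived cold.", ("not our problem", "calm down")),
    PolicyExample("Where is my rider?", ("as i already said", "obviously")),
]


def violates(reply: str, example: PolicyExample) -> bool:
    """Simplistic stand-in check: does the reply contain any banned phrase?"""
    lowered = reply.lower()
    return any(phrase in lowered for phrase in example.banned_phrases)


def pass_rate(agent: Callable[[str], str], dataset: list[PolicyExample]) -> float:
    """Fraction of dataset prompts for which the agent's reply passes the check."""
    passed = sum(not violates(agent(ex.prompt), ex) for ex in dataset)
    return passed / len(dataset)


def polite_agent(prompt: str) -> str:
    return "I'm sorry about that. Let me look into it for you."


def curt_agent(prompt: str) -> str:
    return "Obviously it's on the way. Calm down."
```

Because `pass_rate` only needs a callable that maps a prompt to a reply, the same dataset can benchmark customer-, partner- and rider-facing agents without changes.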

What’s the big picture?

Many companies assume that innovation and governance are at odds with one another. At Deliveroo, we have built a unified AI infrastructure that enhances both. Our AI Hub serves as a one-stop shop for AI governance, from evaluating AI Agents to streamlining governance activities in a centralised platform.

By embedding governance directly into our platform infrastructure, we remove friction from the development process rather than adding to it. Engineers can deploy and iterate faster. Built-in discoverability, tracing, and evaluation ensure that safety and compliance are addressed by design. This approach allows us to scale AI systems with confidence. Automation reduces duplicated effort, provides continuous oversight, and shortens time to market.